Since 2023, teachers have been observing a specific kind of student behaviour that they have struggled to put a name to. Students complete their assignments. And then a week later they do not remember anything at all from that homework.

By late 2025, 62% of middle school, high school, and college students in the US were using AI for homework, up from 48% seven months earlier. At the same time, teachers report a ‘digitally induced amnesia’ where students cannot recall the information they submitted because they never committed it to memory.

This is not primarily a cheating problem. The students see this as being efficient, since the entire narrative around AI is about efficiency and getting the right solution. In the traditional grade-centric education system that emphasises output rather than understanding, they are right.

But the problem is more structural: homework, assignments, and tests are fundamentally not about the outcomes and results. Minas Karamanis captured this quite accurately in a recent essay, The Machines are fine, I am worried about us:

We have built an entire evaluation system around counting things that can be counted, and it turns out that what actually matters is the one thing that can’t be...What matters is the process of getting them: the development and application of methods, the training of minds, the creation of people who know how to think about hard problems. If you hand that process to a machine, you haven’t accelerated science. You’ve removed the only part of it that anyone actually needed.

The education process is as much about the grind, the trials and tribulations, and the struggle to get there as it is about the outcomes, if not more.

AI has now decoupled learning and comprehension from the process of getting results even further. Answers are now free, instant, indistinguishable from one student to another. They are free from all the subjective influences, but they also leave behind no traces of struggle.

Before AI, another industry commoditised answers without attracting criticism: the publishers of academic answer-books. For generations, they built the crutches for students who were stuck in homework assignment loops and helped them “complete the task.” The books were sold under the garb of reference books, but everyone knew what they were buying.

But there were points of friction. First, you needed access to reference books. Even when you had them, you still needed to know where to look, which pages and theories to cross-reference, and then manually copy the solution. The effort was still there, and you might absorb something via osmosis along the way.

ChatGPT has removed that friction and compressed the process entirely. For our publishers, AI just ate their lunch, dinner, breakfast, a second breakfast, and elevenses.

A brief came into our studio from exactly this kind of publisher. The answer was not to make their product smarter. Stripped to its essence, the problem statement was: what do we do now?

The wrong answer to the right question

The obvious way of adding AI to an existing edtech product would be to do it in a standard capacity: with a sprinkle of personalisation, improved search, recommendations, and a conversation bot that explains things in plainer language. That makes the management happy, shareholders rush to boost the stock prices, and voila.

Data has now started to show that this would be an utterly wrong move. A study involving nearly a thousand high school students found that those who used ChatGPT as a study aid did worse on subsequent tests than those who studied without it. Students with AI assistance thought they had done significantly better on the test even though they had not. This was described as an autopilot problem: the more you shy away from the cognitive work, the less practiced and adept your mental muscles become at that task.

Making an AI similar to ChatGPT does not solve the cognitive problem, but merely accelerates it.

We have to reframe the problem and turn it on its head: how might we design AI to do the opposite?

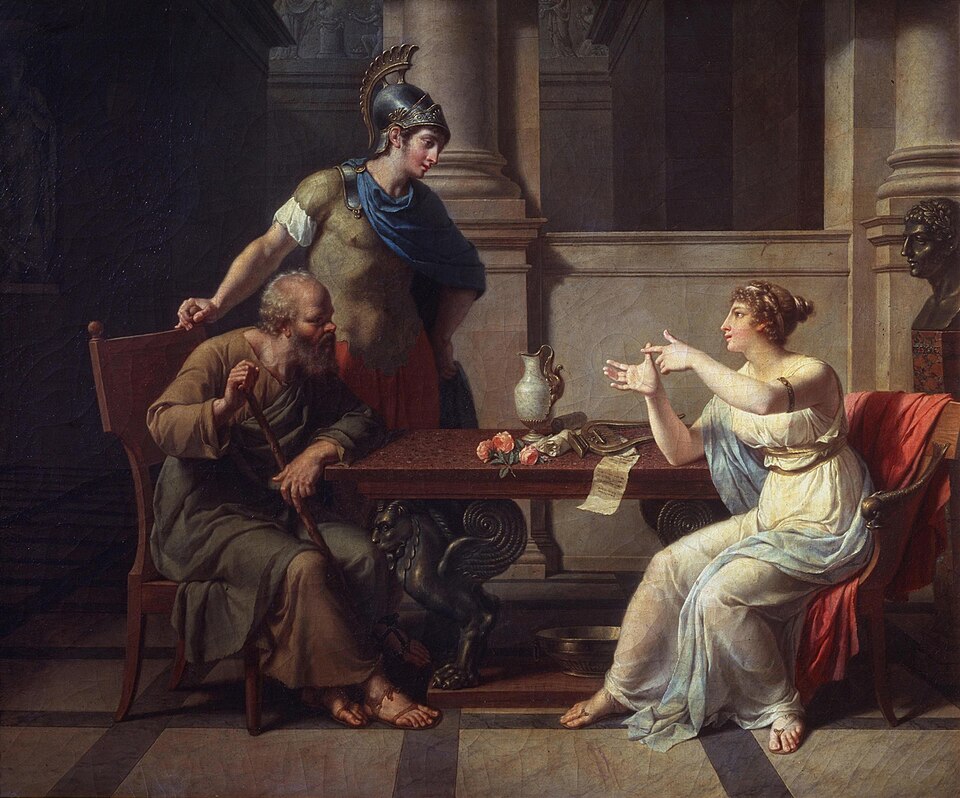

Socratic Emergence

The Socratic method predates homework and assignments. Socrates asked pointed and specific questions that forced others to examine their ideas about what they knew and what they assumed. It made them identify the gaps, and within this struggle, they then found their way towards the answers.

An anti-solution AI, built around this principle, behaves quite differently from ChatGPT, Gemini, what have you. It does not respond to a question with an answer, but leads with another question specifically intended to get students closer to understanding the concept without short-circuiting the process of comprehension. It probes into what the student has attempted and picked up so far, without giving away the solution.

We call this principle Socratic Emergence. It does not deliver understanding in one huge wall of text that no one would read, but reveals it in layers through exchange and at the student’s own pace, through their own reasoning and research. The Socratic AI makes the student earn the answer as a reward for grasping the concept first.

You can prompt a general-purpose AI to “ask questions.” OpenAI has a study mode that attempts exactly this, but by their own admission, the system instructions are leaky and the behaviour is inconsistent. Socratic Emergence is an architecturally different behaviour that does not leave loopholes that determined and seasoned GPT users can exploit to get to answers. The Socratic constraint prevents the answer from becoming available no matter how the question is framed, and it produces a genuinely different experience. The guardrails are an architectural choice.
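To make "architectural" concrete, here is a minimal sketch of what a constraint outside the prompt could look like. It is an illustration under stated assumptions, not a description of any shipped system: the names `SocraticSession`, `Stage`, and `FALLBACK_QUESTION` are hypothetical, and it assumes some upstream step has already labelled each candidate reply with a kind such as "question", "hint", or "full_answer". The point is only that the gate is ordinary code with no state in which a full answer is allowed, so no phrasing of the student's question can talk it into releasing one.

```python
from dataclasses import dataclass, field
from enum import Enum, auto

# Hypothetical fallback used whenever a candidate reply is not permitted.
FALLBACK_QUESTION = "What have you tried so far, and where did you get stuck?"


class Stage(Enum):
    PROBING = auto()    # tutor may only ask questions or offer hints
    SYNTHESIS = auto()  # student is asked to put it together in their own words
    CONFIRMED = auto()  # student's own explanation has been accepted


@dataclass
class SocraticSession:
    # Assumption: the curriculum supplies named checkpoints for each concept.
    checkpoints: list[str]
    reached: set[str] = field(default_factory=set)
    stage: Stage = Stage.PROBING

    def record_checkpoint(self, name: str) -> None:
        """Mark a checkpoint the student has reached through their own reasoning."""
        if name not in self.checkpoints:
            raise ValueError(f"unknown checkpoint: {name}")
        self.reached.add(name)
        if self.stage is Stage.PROBING and self.reached == set(self.checkpoints):
            self.stage = Stage.SYNTHESIS

    def record_student_summary(self, accepted: bool) -> None:
        """Advance only when the student's own summary has been accepted."""
        if self.stage is Stage.SYNTHESIS and accepted:
            self.stage = Stage.CONFIRMED

    def allowed_reply_kinds(self) -> set[str]:
        """Reply kinds permitted in the current stage; 'full_answer' is never one."""
        if self.stage is Stage.PROBING:
            return {"question", "hint"}
        if self.stage is Stage.SYNTHESIS:
            return {"question", "hint", "synthesis_prompt"}
        return {"question", "confirmation"}

    def filter_reply(self, kind: str, text: str) -> str:
        """Pass a permitted reply through; replace anything else with a question."""
        return text if kind in self.allowed_reply_kinds() else FALLBACK_QUESTION


session = SocraticSession(checkpoints=["army_cost", "rising_taxes"])
# A direct answer is blocked regardless of how the student asked for it.
blocked = session.filter_reply("full_answer", "The empire fell because...")
```

In this sketch, confirming the student's own explanation is the only way an "answer" ever surfaces, which mirrors the end of the example conversation below. How replies get labelled, and how a student's summary is judged acceptable, are the hard parts and are deliberately left out.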

Example conversation

Student: Why did the Mauryan empire fall after Ashoka?

AI: Before we get there — what do you know about how the empire was doing during Ashoka’s reign?

[Give me a hint] [I’m not sure where to start]

Student: Strong I think. He was a big emperor and conquered a lot.

AI: He did. But after Kalinga, Ashoka made a significant shift. Do you remember what changed about how he ruled?

[Give me a hint] [I don’t remember this part]

Student: I don’t remember this part.

AI: That’s okay. Here’s a stepping stone: Ashoka became deeply committed to Buddhism after the Kalinga war and renounced violence. What do you think that meant for how he ran the empire day to day?

[Give me a hint] [I’m completely lost]

Student: He stopped fighting wars?

AI: Exactly. Now think about this — if you’re running a large empire and you stop expanding, what happens to your army?

[Give me a hint] [I’m completely lost] [Let me try a different angle]

Student: I’m completely lost.

AI: Let’s make it concrete. An army of 50,000 soldiers still needs to be paid and fed every month. But if there are no wars, no new territories, no new wealth coming in — what’s the problem the emperor now has?

[Give me a hint] [I’m completely lost] [Let me try a different angle]

Student: You have to keep spending money but nothing is coming in?

AI: Right. So where does the money have to come from instead?

[Give me a hint] [I’m completely lost] [Let me try a different angle]

Student: Taxes?

AI: And what happens to the people paying those taxes when they keep going up?

[Give me a hint] [I’m completely lost] [Let me try a different angle]

Student: They get angry.

AI: So you now have an expensive army with nothing to do, rising taxes, and unhappy people. What do you think a regional governor — someone ruling a far corner of the empire — might start thinking at this point?

[Give me a hint] [I’m completely lost]

Student: That he could just... be on his own?

AI: What would stop him?

[Give me a hint] [I’m completely lost]

Student: The emperor? But if the emperor after Ashoka was weak...

AI: Now you’re getting somewhere. Put it together in your own words — why did the empire weaken?

[Give me a hint] [I’m completely lost]

Student: Because after Ashoka stopped conquering, there was less money coming in, taxes went up, people were unhappy, and the governors in far places started breaking away?

AI: That’s a solid answer. And you got there yourself.

[I want to go deeper] [Test me on this] [Move to the next topic]

The time is now

Products attempting this exist: Khanmigo, MathGPT, and Socra among them. But they sit mostly at the edges, with their focus on specific higher education subjects in English-language markets. The opportunity is huge outside of those niches. Yet no established edtech product with existing content depth, curriculum structure, and user trust has built this as its core model.

Even as students use AI more and more to get help with their homework, they are aware of the harms. The RAND survey mentioned earlier notes that 67% of students are worried that using AI is eroding their ability to think critically.

For edtech (including our aforementioned publishers and their peers), this is the right kind of pivot waiting to be executed. They are already sitting on the content, the curriculum, the structure, and the expertise to make this happen. The book isn’t replaced, but it turns into the foundation of a complementary layer that responds, probes, and adapts to individual needs and specific points of confusion.

We think AI interaction patterns are as important as AI capabilities. If you’re building in this space, we’d like to talk.

Cover image: Nicolas Monsiaux, Public domain, via Wikimedia Commons
